By: Ryan Thorngren, Lei Gioia, Carolyn Zhang

We show by a counting argument that even though translation symmetry admits symmetric short-range entangled (SRE) eigenstates, there are not enough such SRE eigenstates to span the zero momentum sector. This means that the fixed point strong-to-weak spontaneous symmetry breaking state of translation symmetry is long-range entangled: it cannot be written as a mixture of SRE states. This is a subtle form of long-range entanglement in mixed states that cannot be detected by long-range connected correlation functions.

By: Yuzhen Zhang, Isaac H. Kim, Yimu Bao, Sagar Vijay

We show that the low-energy states of non-Abelian topological orders possess extensive magic which is long-ranged, and cannot be eliminated by a constant-depth local unitary circuit. This refines conventional notions of complexity beyond the linear circuit depth which is required to prepare any topological phase, and provides a new resource-theoretic characterization of topological orders. A central technical result is a no-go theorem establishing that stabilizer states--even up to constant-depth local unitaries--cannot approximate low-energy states of non-Abelian string-net models which satisfy the entanglement bootstrap axioms. Moreover, we show that stabilizer-realizable Abelian string-net phases have mutual braiding phases quantized by the on-site qudit dimension, and that any violation of this condition necessarily implies extensive long-range magic. Extending to higher spatial dimensions, we argue that any state obeying an entanglement area law and hosting excitations with nontrivial fusion spaces must exhibit extensive long-range magic. This applies, in particular, to ground states and low-energy states of higher-dimensional quantum double models.

By: Leonardo A. Lessa, Tsung-Cheng Lu

We present a new mechanism for long-range entanglement (LRE) in strongly symmetric many-body mixed states that does not rely on symmetry anomalies or long-range correlations. Our primary example is the maximally mixed state in the translation-invariant subspace on a one-dimensional ring. This state is LRE because translationally symmetric short-range entangled states span a subspace whose dimension grows only polynomially with system size, whereas the full translation-invariant subspace grows exponentially. We further discuss certain unconventional properties of this state, including logarithmically growing conditional mutual information, strong-to-weak spontaneous symmetry-breaking, and Rényi-index-dependent operator-space entanglement. We also construct a geometrically non-local Lindbladian to stabilize this state as the steady state. Our results identify dimensional mismatch as a novel route to LRE that is intrinsic to many-body mixed states.
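The exponential growth of the translation-invariant subspace can be checked numerically. The sketch below is not taken from the paper: it assumes a ring of `n` qudits of local dimension `d` with translation acting by cyclic shifts of the computational basis, so that the zero-momentum dimension equals the number of shift orbits (Burnside's lemma). The function names are illustrative.

```python
from itertools import product
from math import gcd


def zero_momentum_dim(n: int, d: int = 2) -> int:
    """Dimension of the translation-invariant subspace of n qudits on a ring.

    Burnside's lemma: (1/n) * sum_{k=0}^{n-1} d**gcd(k, n),
    since a shift by k sites fixes exactly d**gcd(k, n) basis strings.
    """
    total = sum(d ** gcd(k, n) for k in range(n))
    return total // n


def count_shift_orbits(n: int, d: int = 2) -> int:
    """Brute-force cross-check: count orbits of basis strings under cyclic shifts."""
    seen = set()
    orbits = 0
    for s in product(range(d), repeat=n):
        if s in seen:
            continue
        orbits += 1
        for k in range(n):
            seen.add(s[k:] + s[:k])
    return orbits


if __name__ == "__main__":
    for n in (2, 4, 6, 8, 16, 32):
        print(n, zero_momentum_dim(n))
```

For qubits the count behaves like 2^n / n at large n, which is the exponential side of the dimensional mismatch described in the abstract. The polynomial bound on the span of symmetric SRE states is the paper's result and is not reproduced here.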

By: Jinchang Liu, Elias X. Huber, Zhenyu Du, Xingjian Zhang, Xiongfeng Ma

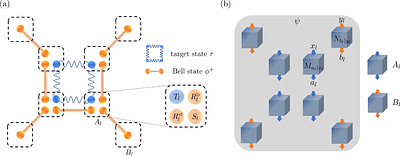

Characterizing large quantum systems with minimal assumptions is a central challenge in quantum information science. Self-testing provides the strongest form of certification by identifying the underlying quantum state solely from observed measurement statistics. However, existing self-testing methods for generic $n$-partite states face a scalability barrier, requiring exponentially many samples in the system size. In this work, we overcome this barrier by introducing a protocol that robustly self-tests almost all $n$-qubit states with only polynomial sample complexity. The key ingredient is an efficient scheme for device-independently evaluating multipartite Pauli measurements, which can be implemented using only a linear number of ancillary Bell pairs together with standard projective and Bell measurements, well within the reach of current quantum technology. Beyond self-testing states, our scheme provides a general framework for implementing a wide range of learning and certification protocols in the device-independent setting, thereby opening a scalable route to device-independent quantum information processing in large-scale quantum networks.

By: Ludovico Lami, Bartosz Regula, Ryuji Takagi

The performance of quantum resource manipulation protocols, including key examples such as distillation of quantum entanglement, is measured in terms of the rate at which desired target states can be produced from a given noisy state. However, to achieve optimal rates, known protocols require precise tailoring to the quantum state in question, demanding a perfect knowledge of the input and allowing no errors in its preparation. Here we show that distillation of quantum resources in the framework of resource non-generating operations can be performed universally: optimal rates of distillation can be achieved with no knowledge of the input state whatsoever, certifying the robustness of quantum resource distillation. The findings apply in particular to the purification of quantum entanglement under non-entangling maps, where the optimal rates are governed by the regularised relative entropy of entanglement. Our result relies on an extension of the generalised quantum Stein's lemma in quantum hypothesis testing to a composite setting where the null hypothesis is no longer a fixed quantum state, but is rather composed of i.i.d. copies of an unknown state. The solution of this asymptotic problem is made possible through new developments in one-shot quantum information and a refinement of the blurring technique from [Lami, arXiv:2408.06410].

By: Patrycja Tulewicz, Karol Bartkiewicz, Franco Nori

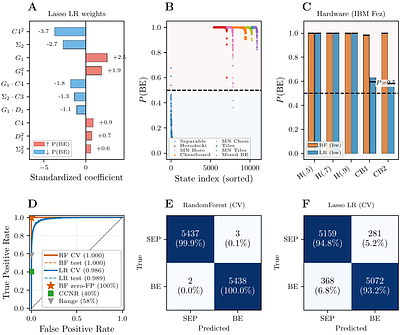

Entanglement can hide in two fundamentally different ways. First, multi-copy correlations can carry information that no single-copy measurement on an unknown state is able to access. Second, bound entangled states possess a positive partial transpose, which makes them invisible to the Peres-Horodecki criterion and all moment inequalities that depend on it. Here we show that the moment difference between the partial transpose and purity decomposes exactly as a chirality-chirality correlator, where the relevant operator is the scalar spin chirality -- the same quantity that governs chiral spin liquids and the topological Hall effect. This decomposition identifies the specific physical structure that multi-copy entanglement detection probes. Using the same controlled-SWAP circuits, we develop a multi-channel spectral classifier for bound entanglement. The classifier combines realignment spectral features with chirality corrections and achieves 99.9% recall at zero false positives across all three known 3x3 bound entangled families, compared with ~40% for the CCNR criterion alone. We also introduce a marginal-noise construction that produces CCNR-invisible bound entangled states, which the classifier detects but which remain invisible to all single-parameter criteria. We validate our approach experimentally on three IBM Quantum processors and demonstrate negativity reconstruction with mean errors of 0.002-0.027, chirality detection for pure and mixed entangled states, and bound entanglement detection across two structurally distinct families (Horodecki and chessboard) on a single gate-based superconducting processor.

By: Michael R. Geller, Victoria S. Ordonez, Yohannes Abate

We consider an extended model of quantum computation where a scalable fault-tolerant quantum computer is coupled to one or more ancilla qubits that evolve according to a nonlinear Schrödinger equation. Following the approach of Abrams and Lloyd, an efficient quantum circuit evaluating an $n$-bit Boolean function in conjunctive normal form is used to prepare an ancilla encoding its number $s$ of satisfying assignments ($0 \le s \le 2^n$). This is followed by a nonlinear quantum state discrimination gate on the ancilla qubit that is used to learn properties of $s$. Here we consider three types of state discriminators generated by different nonlinear Hamiltonians. First, given a restricted Boolean satisfiability problem with the promise of at most one satisfying assignment ($0 \le s \le 1$), we show that a qubit with $\langle σ^z \rangle σ^z$ nonlinearity can be used to efficiently determine whether $s = 0$ or $s = 1$, solving the UNIQUE SAT problem. Here $\langle A \rangle := \langle ψ| A |ψ\rangle$ denotes expectation in the current state. UNIQUE SAT is NP-hard under a randomized polynomial-time reduction (of course any discussion of complexity assumes a scalable, fault-tolerant implementation). Second, for unrestricted satisfiability problems with $0 \le s \le 2^n$, a Hamiltonian with $\langle σ^x \rangle σ^y - \langle σ^y \rangle σ^x$ nonlinearity can be used to efficiently determine whether $s=0$ or $s>0$, thereby solving 3SAT, which is NP-complete. Finally, we show that $\langle σ^y \rangle \langle σ^z \rangle σ^x - \langle σ^x \rangle \langle σ^z \rangle σ^y$ nonlinearity can be used to efficiently measure $s$ and solve #SAT, which is #P-complete. The nonlinear models are of mean field type and might be simulated with ultracold atoms.
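To make the role of $s$ concrete, the following is a classical brute-force reference, offered only as an illustration and not as the nonlinear protocol from the abstract. It counts the satisfying assignments of a CNF formula in exponential time and then answers the three questions the discriminators target. The clause encoding (signed 1-based integers, as in DIMACS files) and all function names are assumptions made for this sketch.

```python
from itertools import product


def count_satisfying(clauses, n):
    """Return s, the number of satisfying assignments of an n-variable CNF formula.

    Each clause is a list of nonzero integers: literal k means variable k is True,
    literal -k means variable k is False (variables are numbered 1..n).
    Runs in O(2**n) time; a classical reference only.
    """
    s = 0
    for bits in product([False, True], repeat=n):
        if all(any(bits[abs(lit) - 1] == (lit > 0) for lit in clause)
               for clause in clauses):
            s += 1
    return s


def summarize(clauses, n):
    """Answer the three questions from the abstract, given s."""
    s = count_satisfying(clauses, n)
    return {
        "s": s,                     # #SAT: the exact count
        "satisfiable": s > 0,       # SAT: s = 0 versus s > 0
        "unique_promise": s <= 1,   # UNIQUE SAT promise: 0 <= s <= 1
    }


if __name__ == "__main__":
    # (x1 OR x2) AND (NOT x1 OR x3) over three variables
    print(summarize([[1, 2], [-1, 3]], 3))
    # x1 AND x2 AND NOT x3 has exactly one satisfying assignment
    print(summarize([[1], [2], [-3]], 3))
```

The classical loop visits all $2^n$ assignments. The abstract's claim is that a suitable nonlinear discriminator would extract the same information about $s$ efficiently.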

By: Zubin Zheng, Jiahao Wu, Shengcai Liu

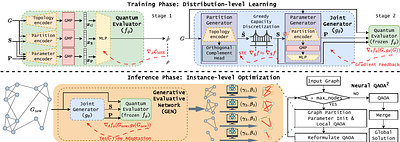

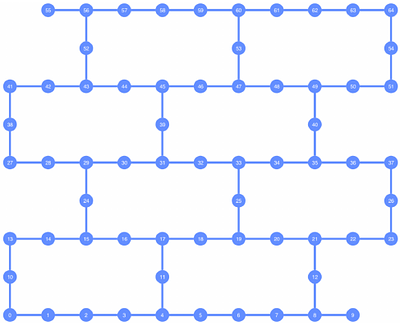

The quantum approximate optimization algorithm (QAOA) holds promise for combinatorial optimization but is constrained by limited qubits. While divide-and-conquer frameworks like QAOA$^{2}$ address scalability by partitioning graphs into subgraphs, existing methods suffer from two fundamental limitations: i) misalignment between heuristic partitioning metrics and quantum optimization goals, and ii) topology-blind parameter initialization that leads to optimization cold starts. To bridge these gaps, we propose Neural QAOA$^{2}$, an end-to-end differentiable framework that jointly generates graph partitions and initial parameters. By integrating a generative evaluative network (GEN), our method utilizes a differentiable quantum evaluator as a high-fidelity performance surrogate to provide direct gradient guidance, enabling the joint generator to learn the intrinsic mapping from graph topology to high-quality partition and parameter configurations. Extensive experiments on 183 QUBO, Ising, and MaxCut instances (21 to 1000 variables) demonstrate that our gradient-driven approach broadly outperforms heuristic baselines, ranking first on 101 instances. It exhibits zero-shot generalization across out-of-distribution graph topologies and scales.
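The divide-and-conquer idea can be illustrated classically on MaxCut. The sketch below is a toy stand-in and not the Neural QAOA$^{2}$ method: it assumes a fixed, hand-chosen partition, replaces every QAOA call with exhaustive search, and merges by choosing, for each part, whether to flip its local solution. All names are hypothetical.

```python
from itertools import product


def cut_value(edges, side):
    """Total weight of edges (u, v, w) whose endpoints lie on different sides."""
    return sum(w for u, v, w in edges if side[u] != side[v])


def brute_force_maxcut(nodes, edges):
    """Exhaustive MaxCut on a small node set (stand-in for a QAOA subproblem solve)."""
    nodes = list(nodes)
    best_side, best_val = None, float("-inf")
    for bits in product([0, 1], repeat=len(nodes)):
        side = dict(zip(nodes, bits))
        val = cut_value(edges, side)
        if val > best_val:
            best_side, best_val = side, val
    return best_side, best_val


def divide_and_conquer_maxcut(edges, parts):
    """Solve each part independently, then pick the best per-part flips."""
    side = {}
    for part in parts:
        inner = [(u, v, w) for u, v, w in edges if u in part and v in part]
        local_side, _ = brute_force_maxcut(part, inner)
        side.update(local_side)
    # Merge step: flipping a whole part keeps its internal cut but changes
    # which cross-part edges are cut, so search over the flip pattern.
    best_side, best_val = None, float("-inf")
    for flips in product([0, 1], repeat=len(parts)):
        trial = dict(side)
        for flip, part in zip(flips, parts):
            if flip:
                for u in part:
                    trial[u] ^= 1
        val = cut_value(edges, trial)
        if val > best_val:
            best_side, best_val = trial, val
    return best_side, best_val


# Two unit-weight triangles joined by two cross edges.
EDGES = [(0, 1, 1), (1, 2, 1), (0, 2, 1),
         (3, 4, 1), (4, 5, 1), (3, 5, 1),
         (2, 3, 1), (0, 5, 1)]
PARTS = [{0, 1, 2}, {3, 4, 5}]

if __name__ == "__main__":
    _, merged = divide_and_conquer_maxcut(EDGES, PARTS)
    _, optimum = brute_force_maxcut(range(6), EDGES)
    print(merged, optimum)
```

The quality of the merged cut depends on the partition, which is the misalignment the abstract points to when partitions are chosen by heuristics unrelated to the optimization goal.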

By: Ankit Kulshrestha, Xiaoyuan Liu

A quantum compiler is a critical piece in the quantum computing pipeline since it allows an abstract quantum circuit to be run on a physical quantum computer. One extremely important subproblem in quantum compilation is the generation of a logical-to-physical qubit mapping. Typically in quantum compilers this step is implemented as either a random or a heuristic-based assignment that aims to minimize additional (SWAP) gate overhead in the quantum circuit. In this paper, we present an alternative approach to solving the qubit mapping problem. Specifically, we formulate the qubit mapping problem with a combinatorial optimization (CO) objective. We then present a method to find a solution to the CO problem by training a reinforcement learning (RL) policy. We also propose a local-search-based post-processing algorithm to further reduce the overhead. Our results show a dramatic improvement over conventional techniques in reducing the number of SWAPs. On different real-world datasets like MQTBench and Queko circuits, our trained policy achieves a 65-85% reduction in SWAP overhead when compared to existing quantum compilers.
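A minimal sketch of a qubit-mapping objective and a local search over it follows. It is not the paper's CO formulation, RL policy, or post-processing algorithm. It assumes a static mapping and scores it with a common proxy: for each two-qubit gate, the shortest-path distance between the mapped physical qubits minus one. Real routers update the mapping as SWAPs are inserted, so this is only a rough estimate. All names are hypothetical.

```python
from collections import deque
from itertools import combinations


def all_pairs_distances(n_phys, edges):
    """BFS shortest-path distances on an undirected, connected coupling graph."""
    adj = {q: [] for q in range(n_phys)}
    for a, b in edges:
        adj[a].append(b)
        adj[b].append(a)
    dist = {}
    for src in range(n_phys):
        d = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in d:
                    d[v] = d[u] + 1
                    queue.append(v)
        dist[src] = d
    return dist


def swap_proxy(mapping, gates, dist):
    """Sum over two-qubit gates of (distance - 1); mapping[i] is logical i's physical qubit."""
    return sum(dist[mapping[a]][mapping[b]] - 1 for a, b in gates)


def local_search(mapping, gates, dist):
    """Greedy pairwise exchange of assignments until no exchange lowers the proxy."""
    mapping = list(mapping)
    best = swap_proxy(mapping, gates, dist)
    improved = True
    while improved:
        improved = False
        for i, j in combinations(range(len(mapping)), 2):
            mapping[i], mapping[j] = mapping[j], mapping[i]
            cost = swap_proxy(mapping, gates, dist)
            if cost < best:
                best, improved = cost, True
            else:
                mapping[i], mapping[j] = mapping[j], mapping[i]  # undo the exchange
    return mapping, best


# Four physical qubits on a line 0-1-2-3; gates act on logical qubit pairs.
DIST = all_pairs_distances(4, [(0, 1), (1, 2), (2, 3)])
GATES = [(0, 3), (0, 3), (1, 2)]

if __name__ == "__main__":
    identity = [0, 1, 2, 3]
    print(swap_proxy(identity, GATES, DIST))
    print(local_search(identity, GATES, DIST))
```

The greedy exchange can stall in local minima on larger devices, which is one reason a learned or otherwise more global initial mapping is useful before any post-processing.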

By: Álvaro Navarrete, Guillermo Currás-Lorenzo, Margarida Pereira, Marcos Curty

Quantum key distribution (QKD) promises information-theoretic security based on quantum mechanics and idealized device models. Practical implementations, however, deviate from these models due to unavoidable device imperfections, and existing security proofs fall short of capturing the complexity of real-world systems. Here we introduce a versatile numerical finite-key security framework valid against general coherent attacks and applicable to a broad class of practical QKD setups. It accommodates most relevant imperfections at both transmitter and receiver, including non-independent-and-identically-distributed (non-IID) signals arising in high-speed QKD systems due to the limited bandwidth of optical modulators, while requiring only partial characterization of the apparatuses. We demonstrate the power of our framework by proving the security of a realistic decoy-state QKD implementation with laser sources, providing a practical route towards rigorous security certification of real-world QKD setups.