A Foundation Model for Cell Segmentation

Israel, U.; Marks, M.; Dilip, R.; Li, Q.; Schwartz, M. S.; Pradhan, E.; Pao, E.; Li, S.; Pearson-Goulart, A.; Perona, P.; Gkioxari, G.; Barnowski, R.; Yue, Y.; Van Valen, D. A.

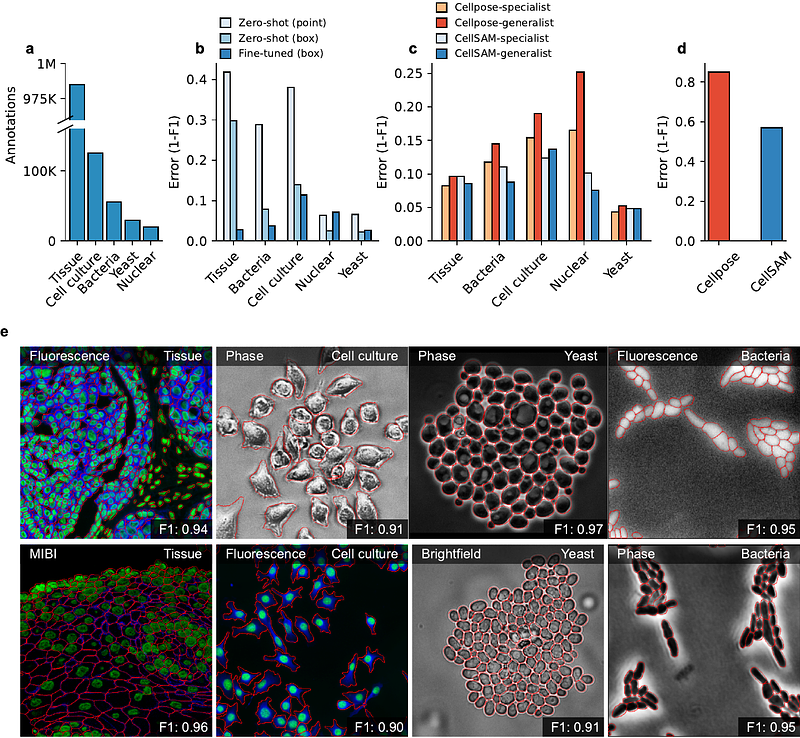

Abstract

Cells are the fundamental unit of biological organization, and identifying them in imaging data (cell segmentation) is a critical task for various cellular imaging experiments. While deep learning methods have led to substantial progress on this problem, the models that have seen wide use are specialist models that work well only for specific domains. Methods that have learned the general notion of "what is a cell" and can identify cells across different domains of cellular imaging data have proven elusive. In this work, we present CellSAM, a foundation model for cell segmentation that generalizes across diverse cellular imaging data. CellSAM builds on top of the Segment Anything Model (SAM) by developing a prompt engineering approach to mask generation. We train an object detector, CellFinder, to automatically detect cells and prompt SAM to generate segmentations. We show that this approach allows a single model to achieve state-of-the-art performance for segmenting images of mammalian cells (in tissues and cell culture), yeast, and bacteria collected with various imaging modalities. To enable accessibility, we integrate CellSAM into DeepCell Label to further accelerate human-in-the-loop labeling strategies for cellular imaging data. A deployed version of CellSAM is available at https://label-dev.deepcell.org/.
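The detect-then-prompt idea described in the abstract can be illustrated with a minimal Python sketch. This is not the CellSAM implementation: `segment_with_box_prompts`, `toy_detector`, and `toy_prompt_segment` are hypothetical names introduced here, the toy detector returns hard-coded boxes in place of a trained detector such as CellFinder, and the toy mask generator thresholds pixels inside each box in place of a promptable model such as SAM. The sketch only shows how per-box masks might be combined into one instance label image.

```python
from typing import Callable, List, Tuple

import numpy as np

# Bounding box as (x0, y0, x1, y1) with exclusive upper bounds (an assumed convention).
Box = Tuple[int, int, int, int]


def segment_with_box_prompts(
    image: np.ndarray,
    detect: Callable[[np.ndarray], List[Box]],
    prompt_segment: Callable[[np.ndarray, Box], np.ndarray],
) -> np.ndarray:
    """Run a detector, prompt a mask generator once per box, merge into labels."""
    labels = np.zeros(image.shape[:2], dtype=np.int32)
    for cell_id, box in enumerate(detect(image), start=1):
        mask = prompt_segment(image, box)  # boolean mask with the image's shape
        # Earlier detections keep pixels they already claimed.
        labels[mask & (labels == 0)] = cell_id
    return labels


def toy_detector(image: np.ndarray) -> List[Box]:
    """Hypothetical stand-in for a trained detector: returns fixed boxes."""
    return [(1, 1, 4, 4), (5, 5, 8, 8)]


def toy_prompt_segment(image: np.ndarray, box: Box) -> np.ndarray:
    """Hypothetical stand-in for a box-prompted mask generator.

    Marks pixels inside the box that are brighter than the box mean.
    """
    x0, y0, x1, y1 = box
    mask = np.zeros(image.shape[:2], dtype=bool)
    crop = image[y0:y1, x0:x1]
    mask[y0:y1, x0:x1] = crop > crop.mean()
    return mask


# Synthetic 10x10 image with two bright 2x2 "cells".
image = np.zeros((10, 10), dtype=float)
image[2:4, 2:4] = 1.0
image[6:8, 5:7] = 1.0

labels = segment_with_box_prompts(image, toy_detector, toy_prompt_segment)
print(labels)
```

Passing the detector and the mask generator as callables keeps the two stages independent, so either stand-in could be swapped for a real model without changing the merging logic.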