Domain-adaptation deep learning models do not outperform simple baseline models in single-cell anti-cancer drug sensitivity prediction

Esteban-Medina, M.; Bohl, M.; Beerenwinkel, N.; Lenhof, K.

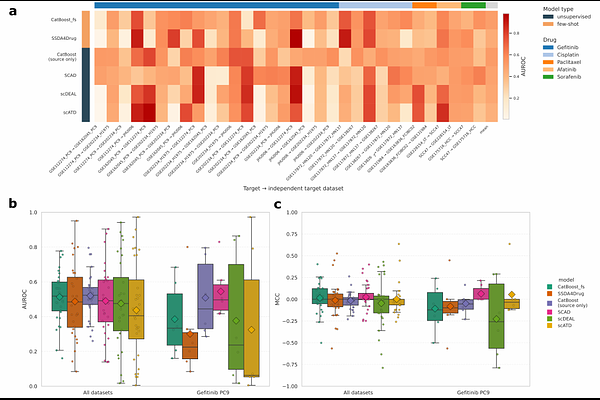

Abstract

Tumor drug response is profoundly shaped by cellular heterogeneity, making single-cell resolution essential for precision oncology. While drug-response labels are abundant for cell lines at bulk resolution, translating these predictive models to the single-cell level requires effective domain adaptation strategies. Motivated by advances in computer vision, recent deep-learning domain adaptation methods promise to transfer knowledge from bulk (source) to single-cell (target) data without the need for target labels. However, their true translational utility remains unclear due to a lack of rigorous evaluation against non-adaptive baselines across diverse biological and technical contexts. Here, we present a comprehensive benchmark comparing four representative domain adaptation methods against two simple gradient-boosting baselines. Through systematic evaluation across 19 single-cell datasets and 10 drugs, we show that none of the complex adaptation methods outperforms the simpler baselines. By analyzing the drivers of model performance, we find that target-informed hyperparameter tuning and sparse label supervision are the principal sources of prediction gain. Our study reveals that current approaches fail to bridge the bulk-to-single-cell conceptual shift and provides a unified codebase and comprehensive data collection to facilitate robust model comparisons. By enabling transparent evaluation and robust benchmarking against simple models, this resource aims to accelerate future developments in translational pharmacogenomics.