Quantifying Scientific Consensus in Biomedical Hypotheses via LLM-Assisted Literature Screening

Kim, U.; Kwon, O.; Lee, D.

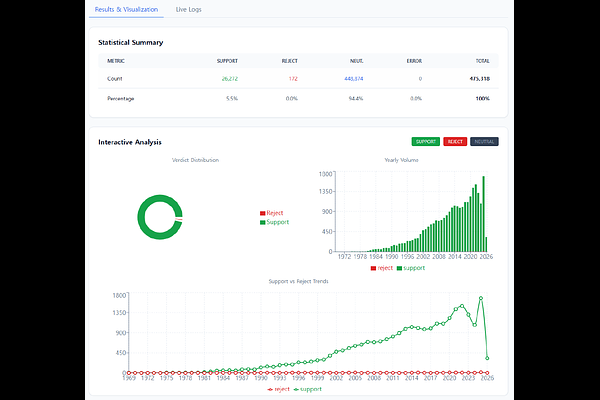

Abstract

Systematic literature reviews are labor-intensive in biomedical research. While Large Language Models (LLMs) using Retrieval-Augmented Generation (RAG) have improved access to information, the inherent complexity of biological systems, characterized by high context dependency and conflicting data, remains a primary driver of LLM hallucinations. This imposes a structural constraint on the precision of evidence synthesis. To address these limitations, we propose an automated framework for the exhaustive identification of supporting and contradictory evidence within a target literature set. Rather than relying on a model's pre-trained knowledge, our system requires the LLM to review each paper individually and determine whether it aligns with a specific research hypothesis. By evaluating semantic context, the framework captures subtle contradictions that conventional methods tend to overgeneralize. We validated the framework on the BioNLI task, where it achieved high classification accuracy in distinguishing whether evidence supports or contradicts a given hypothesis. Notably, an ensemble approach provided greater stability and slightly higher precision than individual models. The framework also performed robustly across several well-established biological hypotheses, supporting its practical utility and reliability in real-world research. This approach provides a rigorous basis for biomedical discovery by enabling precise, systematic analysis of the biological literature and robust collection of evidence.
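As an illustration of the per-paper screening and ensemble idea described above, the following is a minimal sketch, not the authors' implementation. It assumes a three-way label set (`support`, `contradict`, `neutral`), a simple majority vote with ties resolved to `neutral`, and a hypothetical `consensus_score` defined as the fraction of decided papers that support the hypothesis; none of these details are specified in the abstract. Each classifier is assumed to be a callable `clf(hypothesis, text)` wrapping one LLM.

```python
from collections import Counter

# Assumed label set; the abstract only names "supports" and "contradicts".
LABELS = ("support", "contradict", "neutral")


def majority_vote(votes):
    """Return the most common label; empty input or a tie yields 'neutral'."""
    if not votes:
        return "neutral"
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return "neutral"  # assumption: ties are treated as undecided
    return ranked[0][0]


def screen(papers, hypothesis, classifiers):
    """Label every paper individually against the hypothesis, then tally.

    papers: dict mapping paper id -> text
    classifiers: list of callables (hypothesis, text) -> label in LABELS
    """
    per_paper = {}
    for paper_id, text in papers.items():
        votes = [clf(hypothesis, text) for clf in classifiers]
        per_paper[paper_id] = majority_vote(votes)
    return per_paper, Counter(per_paper.values())


def consensus_score(tally):
    """Hypothetical score: supporting papers / (supporting + contradicting)."""
    decided = tally["support"] + tally["contradict"]
    return None if decided == 0 else tally["support"] / decided
```

In this sketch the model never answers from memory alone: every label is tied to one paper's text, so the final tally can be traced back to individual sources. Swapping the majority vote for a weighted or confidence-based scheme would only change `majority_vote`.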