scDynOmics: An Optimized Transformer Model for Representation Learning from Single-Cell Multiomics

Yu, G.; Ramnarine, T. J. S.; Klughammer, J.; Mages, S. W.

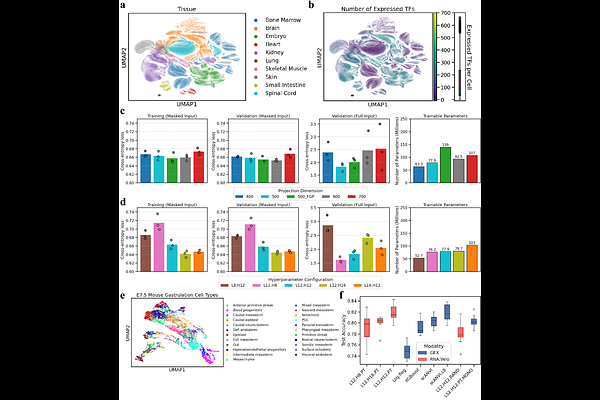

Abstract

As foundation models have become increasingly prevalent across many fields, pretraining models on single-cell transcriptomic data has attracted significant interest. Although existing single-cell foundation models have shown remarkable performance on various biological tasks, two challenges remain largely unaddressed: representing multimodal single-cell data, and efficiently tuning large pretrained models for diverse downstream applications. Here, we introduce scDynOmics, a pretrainable transformer for representation learning from multimodal single-cell data. The model's design is motivated by gene regulatory networks, and it adopts a Linformer-style attention mechanism to scale to coding-genome-wide multimodal inputs. Pretraining on paired single-cell transcriptomic and chromatin accessibility profiles yields compact embeddings that represent cellular states and developmental dynamics. For versatile application, scDynOmics employs low-rank adaptation (LoRA) modules, enabling parameter-efficient fine-tuning for downstream tasks. We demonstrate that scDynOmics achieves state-of-the-art performance in cellular classification and reveals interpretable factors driving developmental trajectories and perturbation responses. Overall, scDynOmics is a scalable, interpretable framework for learning cellular representations and deciphering cellular heterogeneity and dynamics.
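The abstract names two generic techniques without giving their details: Linformer-style attention, which compresses keys and values along the sequence axis so the cost grows linearly rather than quadratically with the number of input tokens, and low-rank adaptation, which freezes a pretrained weight and trains only a small low-rank update. The NumPy sketch below illustrates both under stated assumptions. It is not the authors' implementation. The function and class names, the one-token-per-feature layout, and all sizes (n, d, d_h, k, r) are hypothetical choices for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def linformer_attention(X, Wq, Wk, Wv, E, F):
    """Single-head Linformer-style self-attention (illustrative sketch).

    X: (n, d) token embeddings; one token per gene/region is an assumption.
    Wq, Wk, Wv: (d, d_h) query/key/value projections.
    E, F: (k, n) projections that compress keys and values from n to k
    tokens, so the score matrix is (n, k) instead of (n, n).
    """
    Q = X @ Wq                                # (n, d_h)
    K = E @ (X @ Wk)                          # (k, d_h) compressed keys
    V = F @ (X @ Wv)                          # (k, d_h) compressed values
    scores = Q @ K.T / np.sqrt(Q.shape[1])    # (n, k)
    return softmax(scores, axis=-1) @ V       # (n, d_h)

class LoRALinear:
    """Frozen weight W plus a trainable low-rank update scale * (A @ B)."""

    def __init__(self, W, r, alpha=1.0, rng=None):
        rng = np.random.default_rng(0) if rng is None else rng
        d_in, d_out = W.shape
        self.W = W                                     # frozen pretrained weight
        self.A = rng.normal(0.0, 0.01, size=(d_in, r)) # trainable, random init
        self.B = np.zeros((r, d_out))                  # trainable, zero init
        self.scale = alpha / r

    def __call__(self, X):
        # Because B starts at zero, the adapted layer initially equals X @ W.
        return X @ self.W + self.scale * (X @ self.A) @ self.B

    def trainable_params(self):
        # Only A and B would be updated during fine-tuning; W stays frozen.
        return self.A.size + self.B.size

# Usage with hypothetical sizes: 2000 input tokens compressed to k = 128.
rng = np.random.default_rng(42)
n, d, d_h, k = 2000, 64, 32, 128
X = rng.normal(size=(n, d))
Wq, Wk, Wv = (rng.normal(scale=d ** -0.5, size=(d, d_h)) for _ in range(3))
E, F = (rng.normal(scale=n ** -0.5, size=(k, n)) for _ in range(2))

out = linformer_attention(X, Wq, Wk, Wv, E, F)
print(out.shape)                          # (2000, 32)

lora = LoRALinear(Wq, r=4)
print(lora.trainable_params(), Wq.size)   # 384 2048
```

In this sketch the attention scores occupy n × k entries rather than n × n, and the rank-4 adapter trains 384 parameters in place of the 2,048 in the frozen projection; how scDynOmics sets these quantities is not stated in the abstract.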