Predicting Drosophila Body Orientation from a Translational Trajectory using an Artificial Neural Network

Mangat, N.; May, C. E.; Nagel, K. I.; van Breugel, F.

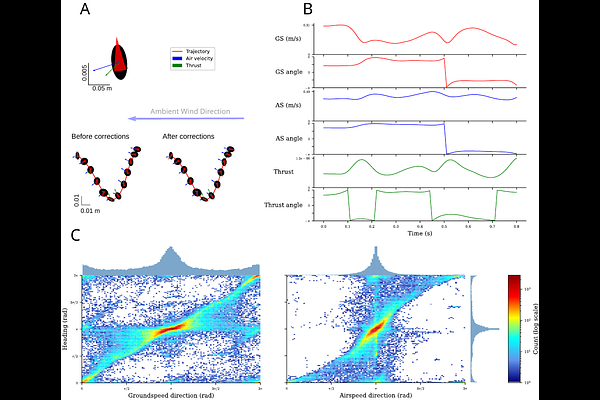

Abstract

Body orientation is a key variable in the analysis of insect flight behavior, yet it remains difficult to measure across the full extent of a trajectory in most experimental settings. Although modern tracking systems reliably capture the position and velocity of the center of mass, resolving body yaw orientation typically requires dedicated hardware confined to a small, purpose-built volume, which makes it impractical for large-scale or long-duration studies. Here, we develop a data-driven estimator that predicts body yaw orientation directly from translational flight trajectory data. We trained a fully connected feedforward artificial neural network (ANN) on a dataset in which flight trajectory and body orientation were recorded simultaneously in freely flying *Drosophila*, using a time-delay embedding of ground velocity, air velocity, and inferred thrust vectors as input features. To improve generalization across arbitrary coordinate frames, we augmented the training data with random rotational transformations. Evaluated on a withheld test set of 3,313 trajectories (101,576 frames), the rotation-augmented model achieved a median mean absolute angular error of 10.51 degrees, with accurate heading recovery across the full [-π, π) range. The estimator provides a practical tool for recovering body orientation from existing trajectory datasets in which only center-of-mass motion was recorded, extending the behavioral and computational analysis of insect navigation to previously inaccessible data.
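The three ingredients named in the abstract (time-delay embedding, rotational augmentation, and an angular error that respects wrap-around) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation. The function names, the window layout, and the choice to treat the augmentation as a planar rotation about the vertical axis applied jointly to the input vectors and the yaw label are all assumptions made for illustration; the abstract does not specify them.

```python
import numpy as np


def wrap_angle(a):
    """Wrap angles in radians into the half-open interval [-pi, pi)."""
    return (np.asarray(a, dtype=float) + np.pi) % (2 * np.pi) - np.pi


def time_delay_embed(features, n_delays):
    """Concatenate each frame with the n_delays frames that follow it in the window.

    features: array of shape (T, F), one row per frame.
    Returns an array of shape (T - n_delays, F * (n_delays + 1)).
    The window length is a free parameter here (assumption).
    """
    T = features.shape[0]
    windows = [features[i : T - n_delays + i] for i in range(n_delays + 1)]
    return np.concatenate(windows, axis=1)


def rotate_trajectory(vectors_xy, yaw, angle):
    """Rotate planar vectors and the yaw label by the same angle.

    vectors_xy: array of shape (T, K, 2), e.g. K = 3 for ground velocity,
    air velocity, and thrust (assumed layout).
    yaw: array of shape (T,), in radians.
    """
    c, s = np.cos(angle), np.sin(angle)
    R = np.array([[c, -s], [s, c]])
    # Row vectors are rotated by right-multiplying with R transposed.
    return vectors_xy @ R.T, wrap_angle(yaw + angle)


def mean_abs_angular_error(pred, true):
    """Mean absolute difference between angles, computed on the circle."""
    return float(np.mean(np.abs(wrap_angle(np.asarray(pred) - np.asarray(true)))))
```

Under these assumptions, an augmented training sample would be produced by drawing `angle` uniformly from [-π, π), calling `rotate_trajectory`, and then embedding the rotated vectors with `time_delay_embed`. Wrapping the difference before taking the absolute value matters near ±π: a prediction of 3.1 rad against a true yaw of -3.1 rad is a small error on the circle, not an error of 6.2 rad.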