RD-VIO: Robust Visual-Inertial Odometry for Mobile Augmented Reality in Dynamic Environments

RD-VIO: Robust Visual-Inertial Odometry for Mobile Augmented Reality in Dynamic Environments

Jinyu Li, Xiaokun Pan, Gan Huang, Ziyang Zhang, Nan Wang, Hujun Bao, Guofeng Zhang

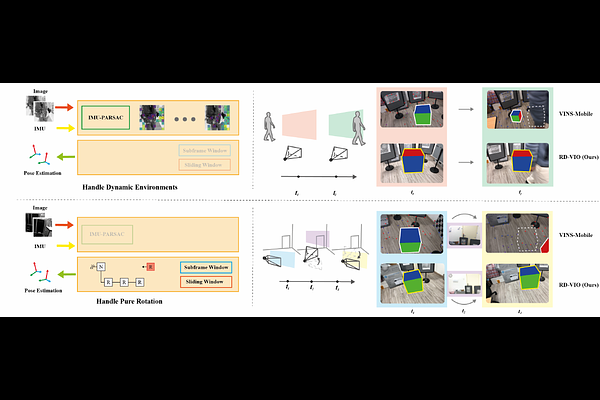

AbstractIt is typically challenging for visual or visual-inertial odometry systems to handle the problems of dynamic scenes and pure rotation. In this work, we design a novel visual-inertial odometry (VIO) system called RD-VIO to handle both of these two problems. Firstly, we propose an IMU-PARSAC algorithm which can robustly detect and match keypoints in a two-stage process. In the first state, landmarks are matched with new keypoints using visual and IMU measurements. We collect statistical information from the matching and then guide the intra-keypoint matching in the second stage. Secondly, to handle the problem of pure rotation, we detect the motion type and adapt the deferred-triangulation technique during the data-association process. We make the pure-rotational frames into the special subframes. When solving the visual-inertial bundle adjustment, they provide additional constraints to the pure-rotational motion. We evaluate the proposed VIO system on public datasets. Experiments show the proposed RD-VIO has obvious advantages over other methods in dynamic environments.