Crossmodal Hierarchical Predictive Coding for Audiovisual Sequences in Human Brain

Huang, Y. T.; Wu, C.-T.; Fang, Y.-X. M.; Fu, C.-K.; Koike, S.; Chao, Z. C.

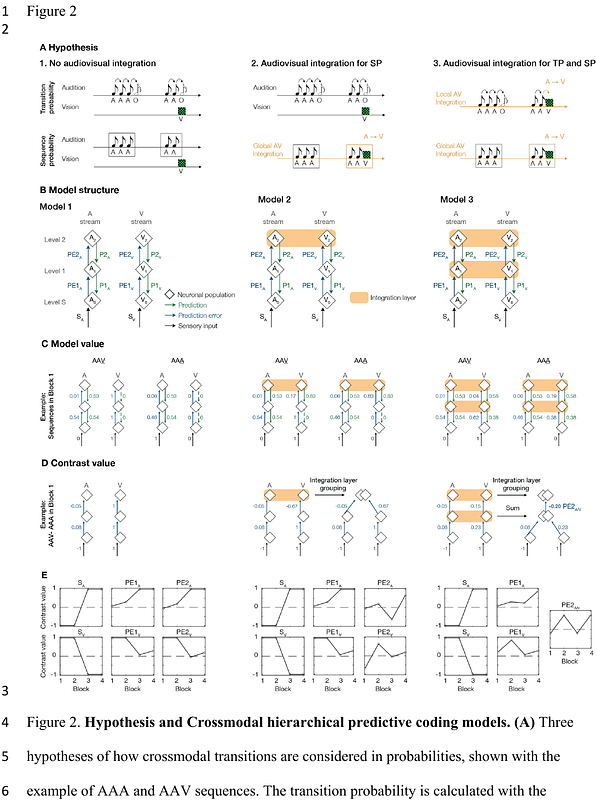

Abstract

Predictive-coding theory proposes that the brain actively predicts sensory inputs based on prior knowledge. While this theory has been extensively researched within individual sensory modalities, empirical evidence for hierarchical predictive processing across modalities is still needed to generalize the theory. Here, we examine how crossmodal knowledge is represented and learned in the brain by identifying the hierarchical networks underlying crossmodal predictions, in which information from one sensory modality leads to a prediction in another modality. We record electroencephalography (EEG) in humans during a crossmodal audiovisual local-global oddball paradigm, in which the predictability of transitions between tones and images is manipulated at two hierarchical levels: stimulus-to-stimulus transition (local level) and multi-stimulus sequence structure (global level). With a model-fitting approach, we decompose the EEG data using three distinct predictive-coding models: one with no audiovisual integration, one with audiovisual integration at the global level, and one with audiovisual integration at both the local and global levels. The best-fitting model demonstrates that audiovisual integration occurs at both levels. This highlights a convergence of auditory and visual information to construct crossmodal predictions, even in the more basic interactions that occur between individual stimuli. Furthermore, we reveal the spatio-spectro-temporal signatures of prediction-error signals across hierarchies and modalities, and show that auditory and visual prediction-error signals are progressively redirected to the central-parietal area of the brain as learning progresses. Our findings unveil a crossmodal predictive-coding mechanism, in which the unimodal framework is implemented through more distributed brain networks to process hierarchical crossmodal knowledge.
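To make the two levels of the local-global design concrete, the following is a minimal sketch of how trials in one block might be labeled. It is not the authors' analysis code. The sequence encoding (`"A"` for a tone, `"V"` for an image), the five-stimulus sequence length, the block composition, and the function name `label_trials` are all hypothetical. The sketch also assumes that a local deviant is a modality switch at the final stimulus and that the most frequent sequence in a block defines the global rule.

```python
from collections import Counter


def label_trials(block):
    """Label each sequence in a block at the local and global levels.

    block: list of strings such as "AAAAV", where "A" stands for a tone and
    "V" for an image (a hypothetical encoding chosen for illustration).
    Returns a list of (sequence, local_label, global_label) tuples.
    """
    # Assumption: the most frequent sequence in the block sets the global rule.
    standard, _ = Counter(block).most_common(1)[0]
    labels = []
    for seq in block:
        # Local level: does the last stimulus differ from the one before it?
        local_label = "deviant" if seq[-1] != seq[-2] else "standard"
        # Global level: does the whole sequence violate the block's rule?
        global_label = "deviant" if seq != standard else "standard"
        labels.append((seq, local_label, global_label))
    return labels


# Hypothetical block: a frequent sequence ending in a crossmodal transition,
# with rare sequences that end in a within-modality repetition.
block = ["AAAAV"] * 8 + ["AAAAA"] * 2
labels = label_trials(block)
```

In this hypothetical block, the frequent sequence is locally deviant but globally standard, whereas the rare sequence is locally standard but globally deviant. This dissociation is what allows prediction errors at the two levels to be separated.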