From sequences to schemas: low-rank recurrent dynamics underlie abstract relational representations

Boboeva, V.; Pezzotta, A.; Dimitriadis, G.; Akrami, A.

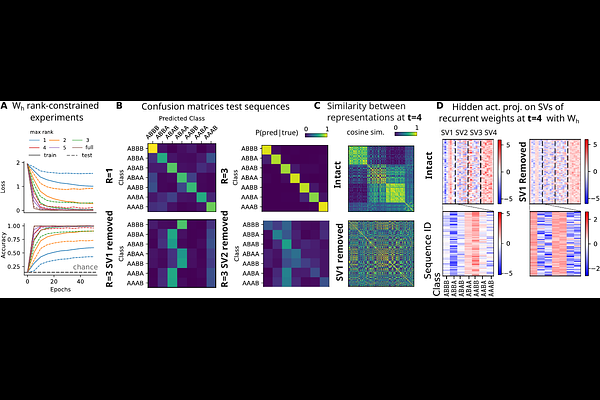

Abstract

A hallmark of intelligent behavior is the ability to extract abstract relational structure from temporal sequences, recognizing, for instance, that aab, ccd, and eef all follow the same underlying pattern, regardless of the specific elements involved. This capacity, observed across species and sensory modalities, is thought to underlie the formation of cognitive schemas: compressed internal models that support rapid generalization to novel experiences. Yet the neural circuit mechanisms by which such abstract, identity-independent representations emerge from sequential experience remain largely unknown. Here, we investigate this question using Recurrent Neural Networks (RNNs) as mechanistic models of neural circuits, trained to classify sequences based on their latent algebraic patterns (e.g., aab and aad map to AAB; aba and aca map to ABA) without supervision on intermediate transitions. We demonstrate that RNNs spontaneously learn low-dimensional representations that mirror the hierarchical generative structure of the sequences, and that this abstraction is mechanistically supported by the emergence of low-rank recurrent connectivity. The leading singular component of the recurrent weight matrix integrates relational transition information (whether consecutive tokens are the same or different) across time, driving the formation of a structured, tree-like geometry in the population state space. Through singular vector ablation, we establish a causal role for this component: removing it selectively erases memory for earlier transitions while leaving local, single-step sensitivity intact. Finally, while RNNs trained on next-token prediction do not spontaneously acquire these abstract representations, transferring the low-rank scaffold learned from classification significantly accelerates learning and improves generalization, an effect specific to the abstract structure of the scaffold rather than generic statistical pretraining.
These findings offer a computational account of how task demands shape recurrent connectivity to support temporal abstraction, with direct implications for understanding schema formation in biological brains.
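
To make the notion of an identity-independent pattern concrete, the following is a minimal illustrative sketch, not the authors' code. It assumes sequences are plain strings of tokens; the function names `abstract_pattern` and `transitions` are hypothetical and introduced here only for illustration. The first maps a sequence to a pattern label such as AAB or ABA, and the second extracts the same/different relation between consecutive tokens.

```python
from string import ascii_uppercase


def abstract_pattern(seq):
    """Map a token sequence to an identity-independent label, e.g. 'ccd' -> 'AAB'.

    Hypothetical helper: each distinct token gets the next unused capital
    letter in order of first appearance (supports at most 26 distinct tokens).
    """
    labels = {}
    out = []
    for tok in seq:
        if tok not in labels:
            labels[tok] = ascii_uppercase[len(labels)]
        out.append(labels[tok])
    return "".join(out)


def transitions(seq):
    """Return 'S' (same) or 'D' (different) for each consecutive token pair."""
    return "".join("S" if a == b else "D" for a, b in zip(seq, seq[1:]))


if __name__ == "__main__":
    for s in ["aab", "ccd", "eef", "aba", "aca"]:
        print(s, abstract_pattern(s), transitions(s))
```

Under these assumptions, aab, ccd, and eef all receive the label AAB, while aba and aca receive ABA. In this sketch the transition string is a coarser description than the pattern label: for example, aba and abc both yield DD.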