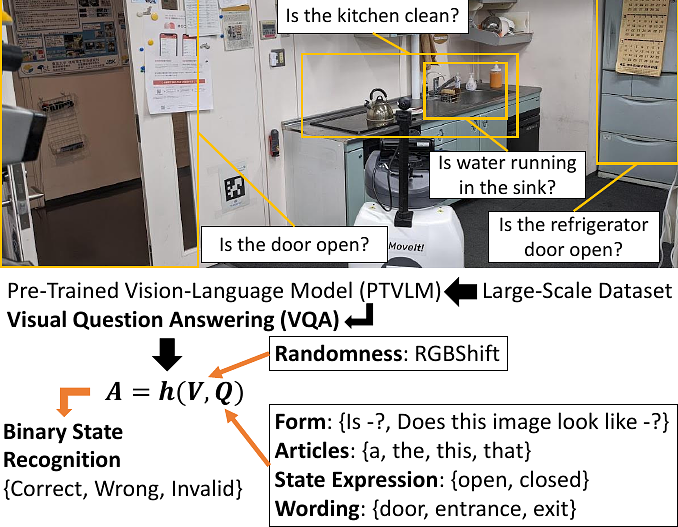

Binary State Recognition by Robots using Visual Question Answering of Pre-Trained Vision-Language Model

Kento Kawaharazuka, Yoshiki Obinata, Naoaki Kanazawa, Kei Okada, Masayuki Inaba

Abstract: Recognition of the current state is indispensable for the operation of a robot. There are various states to be recognized, such as whether an elevator door is open or closed, whether an object has been grasped correctly, and whether a TV is turned on or off. Until now, these states have been recognized by programmatically describing the state of a point cloud or raw image, by annotating images and training on them, or by using special sensors. In contrast to these methods, we apply Visual Question Answering (VQA) with a Pre-Trained Vision-Language Model (PTVLM) trained on a large-scale dataset to such binary state recognition. This idea allows state recognition to be described intuitively in language without any re-training, thereby improving the recognition ability of robots in a simple and general way. We summarize various techniques in questioning methods and image processing, and clarify their properties through experiments.
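As a rough illustration of the idea, binary state recognition through VQA can be sketched as asking one or more yes/no questions about an image and reducing the answers to a boolean. Everything in the sketch below is an assumption made for illustration: the `VQAFn` interface, the `recognize_binary_state` helper, the majority-vote aggregation, and the `fake_vqa` stand-in are hypothetical and are not taken from the paper or from any particular PTVLM library.

```python
from typing import Callable, Iterable

# Hypothetical interface: any VQA backend mapping (image, question) -> free-text answer.
VQAFn = Callable[[object, str], str]


def recognize_binary_state(vqa: VQAFn, image: object, questions: Iterable[str]) -> bool:
    """Return True if a strict majority of the yes/no questions are answered 'yes'.

    Majority voting is an illustrative assumption, not the paper's stated method.
    """
    yes_count = 0
    total = 0
    for question in questions:
        answer = vqa(image, question).strip().lower()
        total += 1
        if answer.startswith("yes"):
            yes_count += 1
    if total == 0:
        raise ValueError("at least one question is required")
    return yes_count * 2 > total


def fake_vqa(image: object, question: str) -> str:
    """Stand-in for a real PTVLM so the sketch runs without a model."""
    return "yes" if image == "open_door.png" else "no"


if __name__ == "__main__":
    questions = ["Is the elevator door open?", "Is this door open?"]
    print(recognize_binary_state(fake_vqa, "open_door.png", questions))
    print(recognize_binary_state(fake_vqa, "closed_door.png", questions))
```

In practice `fake_vqa` would be replaced by a call into an actual pre-trained model. The point of the sketch is that the state definition lives entirely in the question text, so a new state can be added by writing a new question rather than collecting and annotating data.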