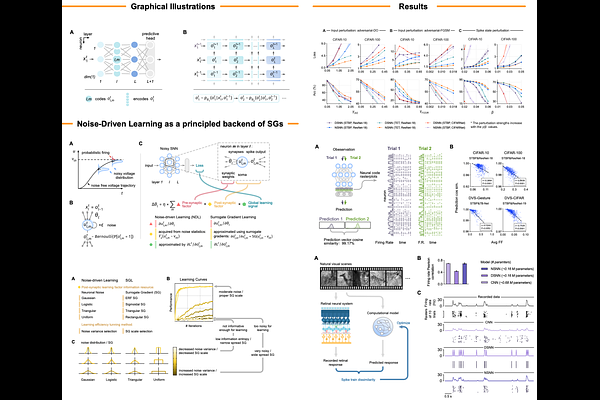

[Patterns] Exploiting Noise as a Resource for Computation and Learning in Spiking Neural Networks

Voice is AI-generated

Description

https://doi.org/10.1016/j.patter.2023.100831

3 comments

scicastboard

Thank you for your submission. Below are a couple of questions on the paper for a non-expert audience:

1. How does the NSNN model incorporate noise into spiking neural networks, and what are the benefits of doing so?

2. How does the NSNN model compare to deterministic SNNs in terms of performance and robustness, and what are the implications of these findings for machine learning and computational neuroscience?

ScienceCast board
