Learning Compact Neural Networks with Deep Overparameterised Multitask Learning

Shen Ren, Haosen Shi

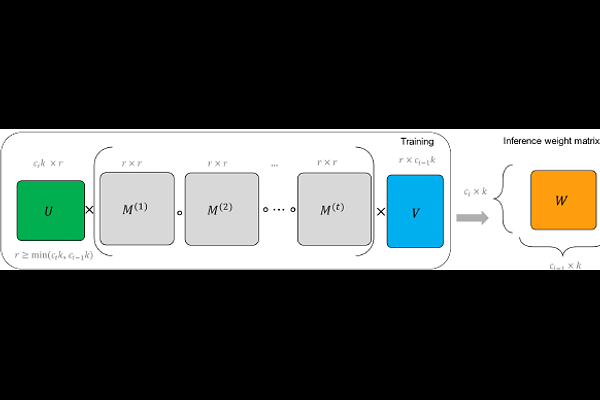

Abstract

Compact neural networks offer many benefits for real-world applications. However, it is usually challenging to train compact neural networks, with small parameter counts and low computational costs, so that they match or exceed the performance of larger and more powerful architectures. This is particularly true for multitask learning, where different tasks compete for shared model resources. We present a simple, efficient and effective overparameterised neural network design for multitask learning: the model architecture is overparameterised during training, and the overparameterised parameters are shared more effectively across tasks, leading to better optimisation and generalisation. Experiments on two challenging multitask datasets (NYUv2 and COCO) demonstrate the effectiveness of the proposed method across various convolutional networks and parameter sizes.
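The general idea of overparameterising in training while deploying a compact model can be illustrated with a linear layer whose weight is represented as a product of factors. The sketch below assumes a factorisation of the form W_t = U M_t V, where U and V are shared by all tasks and M_t belongs to task t; this form, the class `OverparamLinear`, and the task names `seg` and `depth` are hypothetical illustrations, not necessarily the exact scheme used in this work. Because a product of linear maps is itself linear, the factors can be multiplied out after training, so each task deploys a single matrix of the original compact size.

```python
from typing import Dict, List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two matrices stored as lists of rows."""
    inner = len(b)
    assert all(len(row) == inner for row in a), "shape mismatch"
    cols = len(b[0])
    return [[sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
            for i in range(len(a))]


def matvec(w: Matrix, x: List[float]) -> List[float]:
    """Apply matrix w to vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


class OverparamLinear:
    """Hypothetical overparameterised linear layer shared by several tasks.

    Training-time form (an illustrative assumption): W_t = U @ M_t @ V,
    with U (d_out x k) and V (k x d_in) shared across tasks and
    M_t (k x k) owned by task t. With k chosen large, the factors hold
    more trainable parameters than one d_out x d_in matrix per task.
    """

    def __init__(self, u: Matrix, v: Matrix, task_factors: Dict[str, Matrix]):
        self.u = u
        self.v = v
        self.m = task_factors  # task name -> k x k task-specific factor

    def forward(self, task: str, x: List[float]) -> List[float]:
        # Training-time path: apply the factors one after another.
        h = matvec(self.v, x)
        h = matvec(self.m[task], h)
        return matvec(self.u, h)

    def collapse(self, task: str) -> Matrix:
        # Inference-time path: multiply the factors out into one compact matrix.
        return matmul(matmul(self.u, self.m[task]), self.v)


# Usage: d_in = d_out = 2 with inner width k = 3 (toy values).
u = [[1, 0, 1], [0, 1, 1]]
v = [[1, 0], [0, 1], [1, 1]]
factors = {
    "seg": [[1, 0, 0], [0, 2, 0], [0, 0, 1]],
    "depth": [[0.5, 0, 0], [0, 0.5, 0], [0, 0, 0.5]],
}
layer = OverparamLinear(u, v, factors)
x = [1.0, 2.0]
for task in factors:
    compact = layer.collapse(task)
    print(task, compact, layer.forward(task, x), matvec(compact, x))
```

In this toy case the training-time factors hold 6 + 6 + 2 × 9 = 30 parameters, while the two collapsed task matrices hold only 2 × 4 = 8, and both paths produce the same outputs.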