Optimization dependent generalization bound for ReLU networks based on sensitivity in the tangent bundle

Dániel Rácz, Mihály Petreczky, András Csertán, Bálint Daróczy

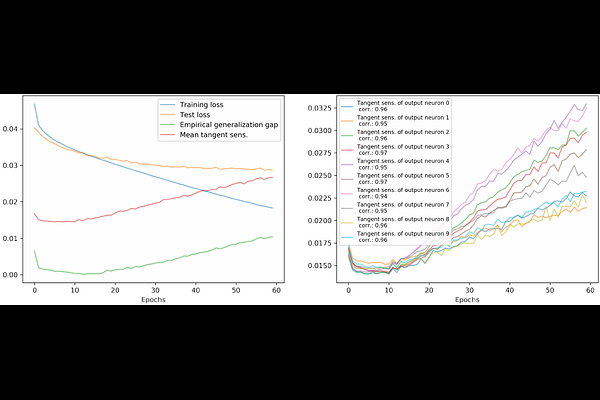

Abstract. Recent advances in deep learning have produced promising results on the generalization ability of deep neural networks; however, the literature still lacks a comprehensive theory explaining why heavily over-parametrized models generalize well while fitting the training data. In this paper we propose a PAC-type bound on the generalization error of feedforward ReLU networks by estimating the Rademacher complexity of the set of networks reachable from an initial parameter vector via gradient descent. The key idea is to bound the sensitivity of the network's gradient to perturbations of the input data along the optimization trajectory. The obtained bound does not explicitly depend on the depth of the network. Our results are experimentally verified on the MNIST and CIFAR-10 datasets.