A pragmatic workflow for research software engineering in computational science

A pragmatic workflow for research software engineering in computational science

Tomislav Marić, Dennis Gläser, Jan-Patrick Lehr, Ioannis Papagiannidis, Benjamin Lambie, Christian Bischof, Dieter Bothe

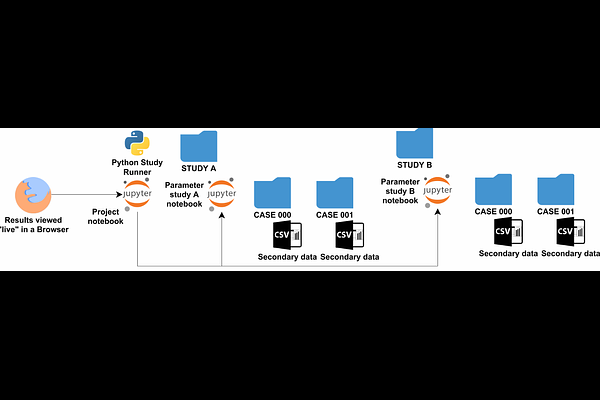

AbstractUniversity research groups in Computational Science and Engineering (CSE) generally lack dedicated funding and personnel for Research Software Engineering (RSE), which, combined with the pressure to maximize the number of scientific publications, shifts the focus away from sustainable research software development and reproducible results. The neglect of RSE in CSE at University research groups negatively impacts the scientific output: research data - including research software - related to a CSE publication cannot be found, reproduced, or re-used, different ideas are not combined easily into new ideas, and published methods must very often be re-implemented to be investigated further. This slows down CSE research significantly, resulting in considerable losses in time and, consequentially, public funding. We propose a RSE workflow for Computational Science and Engineering (CSE) that addresses these challenges, that improves the quality of research output in CSE. Our workflow applies established software engineering practices adapted for CSE: software testing, result visualization, and periodical cross-linking of software with reports/publications and data, timed by milestones in the scientific publication process. The workflow introduces minimal work overhead, crucial for university research groups, and delivers modular and tested software linked to publications whose results can easily be reproduced. We define research software quality from a perspective of a pragmatic researcher: the ability to quickly find the publication, data, and software related to a published research idea, quickly reproduce results, understand or re-use a CSE method, and finally extend the method with new research ideas.