Long-Range Correlation Supervision for Land-Cover Classification from Remote Sensing Images

Dawen Yu, Shunping Ji

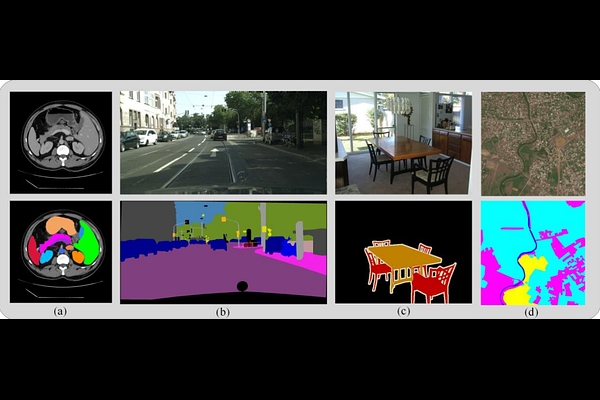

Abstract: Long-range dependency modeling has been widely considered in modern deep-learning-based semantic segmentation methods, especially those designed for large-size remote sensing images, to compensate for the intrinsic locality of standard convolutions. However, in previous studies, the long-range dependency, modeled with an attention mechanism or transformer model, has been learned without supervision rather than under explicit supervision from the objective ground truth. In this paper, we propose a novel supervised long-range correlation method for land-cover classification, called the supervised long-range correlation network (SLCNet), which is shown to be superior to the currently used unsupervised strategies. In SLCNet, pixels sharing the same category are considered highly correlated and those of different categories are considered less relevant, a relationship that can be easily supervised by the category consistency information available in the ground truth semantic segmentation map. Under such supervision, the recalibrated features are more consistent for pixels of the same category and more discriminative for pixels of different categories, regardless of their proximity. To complement the detailed information lacking in the global long-range correlation, we introduce an auxiliary adaptive receptive field feature extraction module, parallel to the long-range correlation module in the encoder, to capture finely detailed feature representations for multi-size objects in multi-scale remote sensing images. In addition, we apply multi-scale side-output supervision and a hybrid loss function as local and global constraints to further boost the segmentation accuracy. Experiments were conducted on three remote sensing datasets. Compared with advanced segmentation methods from the computer vision, medicine, and remote sensing communities, SLCNet achieved state-of-the-art performance on all the datasets.
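
The category-consistency supervision described above can be illustrated with a minimal NumPy sketch. It is not the SLCNet implementation: the function names `category_affinity` and `correlation_bce` are hypothetical, and the choice of binary cross-entropy as the penalty is an assumption made only for illustration. The sketch builds a pairwise target from a ground truth label map (1 where two pixels share a category, 0 otherwise) and scores a predicted correlation matrix against it.

```python
import numpy as np


def category_affinity(labels: np.ndarray) -> np.ndarray:
    """Build an (N, N) 0/1 target from an (H, W) label map, N = H * W.

    Entry (i, j) is 1 when pixels i and j share a category and 0 otherwise,
    regardless of how far apart the two pixels are in the image.
    """
    flat = labels.reshape(-1)
    return (flat[:, None] == flat[None, :]).astype(np.float32)


def correlation_bce(pred: np.ndarray, target: np.ndarray, eps: float = 1e-7) -> float:
    """Mean binary cross-entropy between predicted correlations and the target.

    Assumption: `pred` holds values in [0, 1], e.g. sigmoid-activated
    pairwise similarities. The loss choice here is illustrative only.
    """
    p = np.clip(pred.astype(np.float64), eps, 1.0 - eps)
    t = target.astype(np.float64)
    return float(-np.mean(t * np.log(p) + (1.0 - t) * np.log(1.0 - p)))


# Toy 2 x 2 label map: three pixels of category 0, one pixel of category 1.
labels = np.array([[0, 0],
                   [1, 0]])
target = category_affinity(labels)

# An uninformative prediction (all 0.5) is penalized more than a perfect one.
uniform_loss = correlation_bce(np.full(target.shape, 0.5), target)
perfect_loss = correlation_bce(target, target)
```

Because the target has one entry per pixel pair, its size grows quadratically with the number of pixels, so a sketch like this is only practical on downsampled feature maps.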