AdaPlus: Integrating Nesterov Momentum and Precise Stepsize Adjustment on AdamW Basis

AdaPlus: Integrating Nesterov Momentum and Precise Stepsize Adjustment on AdamW Basis

Lei Guan

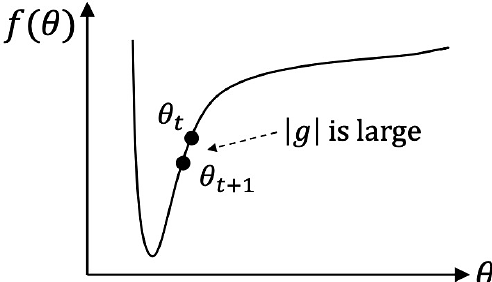

AbstractThis paper proposes an efficient optimizer called AdaPlus which integrates Nesterov momentum and precise stepsize adjustment on AdamW basis. AdaPlus combines the advantages of AdamW, Nadam, and AdaBelief and, in particular, does not introduce any extra hyper-parameters. We perform extensive experimental evaluations on three machine learning tasks to validate the effectiveness of AdaPlus. The experiment results validate that AdaPlus (i) is the best adaptive method which performs most comparable with (even slightly better than) SGD with momentum on image classification tasks and (ii) outperforms other state-of-the-art optimizers on language modeling tasks and illustrates the highest stability when training GANs. The experiment code of AdaPlus is available at: https://github.com/guanleics/AdaPlus.