Improving Zero-shot Reader by Reducing Distractions from Irrelevant Documents in Open-Domain Question Answering

Sukmin Cho, Jeongyeon Seo, Soyeong Jeong, Jong C. Park

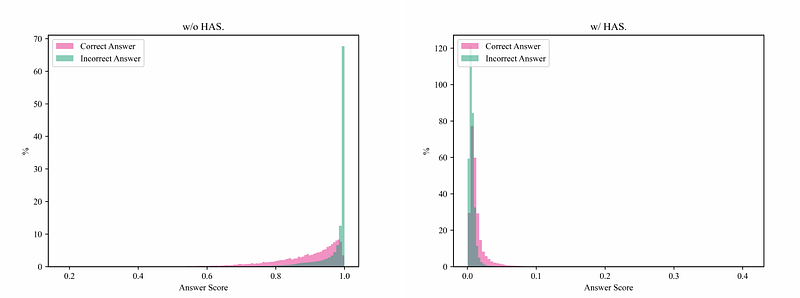

Abstract

Large language models (LLMs) enable zero-shot approaches in open-domain question answering (ODQA), yet progress on the reader has been limited compared to the retriever. This study investigates the feasibility of a zero-shot reader, which avoids the computational cost of training and the need for labeled data. We find that LLMs used as zero-shot readers are distracted by irrelevant documents in the retrieved set and generate overconfident answers. To tackle these problems, we mitigate the impact of such documents via Distraction-aware Answer Selection (DAS), which combines a negation-based instruction with score adjustment for proper answer selection. Experimental results show that our approach handles distraction across diverse scenarios and improves the performance of zero-shot readers. Furthermore, unlike supervised readers, which struggle with unseen data, zero-shot readers demonstrate outstanding transferability without any training.
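To make the answer-selection idea concrete, here is a minimal Python sketch under stated assumptions. It assumes the reader has already produced one candidate answer per retrieved document together with an answer probability, and that the negation-based instruction makes the reader output the literal string "unanswerable" when a document does not contain the answer. The `Candidate` class, the `doc_relevance` weight, and the product-form score adjustment are hypothetical illustrations, not the exact DAS formulation.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

# Hypothetical abstention label that the negation-based instruction asks the
# reader to emit when a document does not contain the answer.
UNANSWERABLE = "unanswerable"


@dataclass
class Candidate:
    answer: str           # answer generated from one retrieved document
    answer_prob: float    # reader's likelihood of that answer (assumed in [0, 1])
    doc_relevance: float  # hypothetical per-document relevance weight in [0, 1]


def select_answer(candidates: Sequence[Candidate]) -> Optional[str]:
    """Pick one final answer from per-document candidates (illustrative only)."""
    best_answer, best_score = None, float("-inf")
    for c in candidates:
        # Step 1: discard documents on which the reader abstained.
        if c.answer.strip().lower() == UNANSWERABLE:
            continue
        # Step 2: adjust the raw answer probability so that a confident answer
        # from a weakly relevant document cannot dominate (assumed product form).
        score = c.answer_prob * c.doc_relevance
        if score > best_score:
            best_answer, best_score = c.answer, score
    # Returns None if every document was judged unanswerable.
    return best_answer


candidates = [
    Candidate("Paris", 0.6, 0.9),
    Candidate("Lyon", 0.95, 0.2),          # overconfident answer from a weak document
    Candidate("unanswerable", 0.99, 0.8),  # reader abstained on this document
]
print(select_answer(candidates))  # prints Paris
```

On this toy input, ranking by raw probability would pick the overconfident "Lyon" (0.95). After the abstaining document is filtered out and scores are adjusted, "Paris" scores 0.54 against 0.19 for "Lyon".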