Filling in the Gaps: Efficient Event Coreference Resolution using Graph Autoencoder Networks

Loic De Langhe, Orphée De Clercq, Veronique Hoste

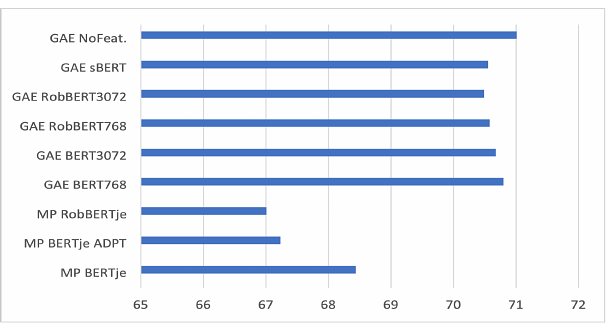

Abstract

We introduce a novel and efficient method for Event Coreference Resolution (ECR) applied to a lower-resourced language domain. By framing ECR as a graph reconstruction task, we combine deep semantic embeddings with structural knowledge of coreference chains to create a parameter-efficient family of Graph Autoencoder (GAE) models. Our method significantly outperforms classical mention-pair methods on a large Dutch event coreference corpus in terms of overall score, efficiency and training speed. Additionally, we show that our models consistently classify more difficult coreference links correctly and are far more robust in low-data settings than transformer-based mention-pair coreference algorithms.
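To make the graph reconstruction framing concrete, the following is a minimal, untrained sketch of a generic graph autoencoder forward pass. It is not the architecture evaluated in this paper. Nodes stand for event mentions, node features stand in for semantic embeddings, and edges are observed coreference links. The toy features, the identity weight matrix, the single linear graph-convolution layer and all function names are assumptions made purely for illustration.

```python
import math

def normalize_adjacency(adj):
    """Return D^-1/2 (A + I) D^-1/2 for a symmetric 0/1 adjacency matrix."""
    n = len(adj)
    # Add self-loops so every node keeps part of its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]

def matmul(a, b):
    """Plain-Python matrix product of two list-of-lists matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def encode(adj, features, weights):
    """One linear graph-convolution layer: Z = A_norm X W."""
    return matmul(matmul(normalize_adjacency(adj), features), weights)

def decode(z):
    """Inner-product decoder: P[i][j] = sigmoid(z_i . z_j)."""
    n = len(z)
    return [[1.0 / (1.0 + math.exp(-sum(a * b for a, b in zip(z[i], z[j]))))
             for j in range(n)]
            for i in range(n)]

# Hypothetical toy data: mentions 0, 1 and 2 belong to one chain, mention 3
# does not. Only the links 0-1 and 1-2 are observed; 0-2 is the gap to fill.
features = [[1.0, 0.0], [0.8, 0.2], [0.9, 0.1], [0.0, 1.0]]
observed = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]
weights = [[1.0, 0.0], [0.0, 1.0]]  # identity; a real model would learn this

probs = decode(encode(observed, features, weights))
print("score for held-out link 0-2:", round(probs[0][2], 3))
print("score for non-link 0-3:", round(probs[0][3], 3))
```

In this toy setting the decoder scores the held-out link between mentions 0 and 2 higher than the pair 0 and 3, because message passing over the observed links pulls the representations of chain members together. A trained model would learn the weight matrix by minimising a reconstruction loss over the observed links.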