ACT-SQL: In-Context Learning for Text-to-SQL with Automatically-Generated Chain-of-Thought

Hanchong Zhang, Ruisheng Cao, Lu Chen, Hongshen Xu, Kai Yu

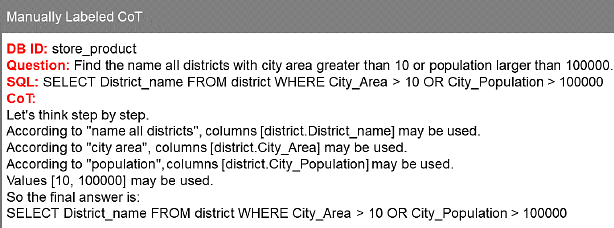

Abstract

Recently, large language models (LLMs) have demonstrated strong abilities across a variety of domains and tasks. We study prompt design for the text-to-SQL task and attempt to improve the LLMs' reasoning ability when generating SQL queries. Beyond the plain few-shot in-context learning setting, we design our chain-of-thought (CoT) prompt using a method similar to schema linking. We propose a method named ACT-SQL that automatically generates auto-CoT exemplars, so the whole process requires no manual labeling. Our approach is cost-saving because it calls the LLM's API only once to generate each SQL query. Furthermore, we extend our in-context learning method to the multi-turn text-to-SQL task. The experimental results show that the LLMs' performance benefits from our ACT-SQL approach. Our approach achieves state-of-the-art (SOTA) performance on the Spider dev set among existing in-context learning approaches.
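To make the idea concrete, the following is a minimal sketch of how a few-shot prompt with automatically generated, schema-linking-style reasoning might be assembled so that a single LLM call suffices. It is an illustration under stated assumptions, not the paper's implementation: the names `Exemplar`, `auto_cot`, `format_schema`, and `build_prompt`, the prompt layout, and the naive substring matching are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Exemplar:
    """A labeled training example (hypothetical container)."""
    question: str
    schema: Dict[str, List[str]]  # table name -> column names
    sql: str


def auto_cot(ex: Exemplar) -> str:
    """Build a reasoning string without manual labeling.

    Assumption: a schema item is "linked" when it appears in the gold SQL;
    a column is additionally tied to the question when its name occurs
    there as a substring. This is a naive stand-in for schema linking.
    """
    sql_lower = ex.sql.lower()
    q_lower = ex.question.lower()
    steps = []
    for table, columns in ex.schema.items():
        if table.lower() in sql_lower:
            steps.append(f'The question needs table "{table}".')
        for col in columns:
            name = col.lower()
            if name in sql_lower and name.replace("_", " ") in q_lower:
                steps.append(f'"{col}" in the question refers to {table}.{col}.')
    return " ".join(steps)


def format_schema(schema: Dict[str, List[str]]) -> str:
    """Render each table as table(col1, col2, ...), one per line."""
    return "\n".join(f"{t}({', '.join(cols)})" for t, cols in schema.items())


def build_prompt(exemplars: List[Exemplar],
                 schema: Dict[str, List[str]],
                 question: str) -> str:
    """Concatenate CoT exemplars and the test question into one prompt,
    so only one LLM API call is needed per generated SQL query."""
    parts = []
    for ex in exemplars:
        parts.append(
            f"Schema:\n{format_schema(ex.schema)}\n"
            f"Question: {ex.question}\n"
            f"Let's think step by step. {auto_cot(ex)}\n"
            f"SQL: {ex.sql}"
        )
    parts.append(
        f"Schema:\n{format_schema(schema)}\n"
        f"Question: {question}\n"
        "Let's think step by step."
    )
    return "\n\n".join(parts)
```

The resulting string would be sent to the LLM once; the model is expected to continue the final "Let's think step by step." line with its own reasoning followed by the SQL query. Substring matching is deliberately simplistic here and would produce false links (for example, a column named `age` matching the word "average").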